Securing Society 5.0

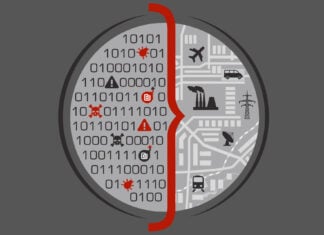

Society 5.0 is a concept that envisions a new form of society in which advanced technologies such as artificial intelligence (AI), the Internet of Things (IoT), robotics, blockchain, the metaverse, and big data are integrated into all aspects of life.

In Society 5.0, technology is used not only for economic growth (Industry 4.0) but also for solving social issues such as population aging, environmental degradation, climate change, physical and mental health, urbanization, and crime. Society 5.0 aims to create a human-centric society where technology is used to enhance people’s quality of life and promote well-being.

This concept is being promoted by the Japanese government and has gained global attention as a model for the next generation of societies.

Learn more: Will 5G and Society 5.0 Mark a New Era in Human Evolution?

For over 30 years, I have been safeguarding lives, well-being, the environment, and businesses by integrating cybersecurity, cyber-physical systems security, operational resilience, and functional safety approaches. I have held multiple CTO, CISO, and emerging technology risk management leadership roles for various global organizations.

My Defence.AI Website